Introduction

Why Hallucinations Are Killing AI Trust

LLMs like GPT-4 and Claude are amazing at generating content, but they are also known for confidently stating false or fabricated information. This phenomenon, known as AI hallucination, is one of the biggest blockers for adoption in enterprise environments and regulated industries.

From virtual customer support agents to medical assistants and internal copilots, reliability is critical. In this blog, we’ll walk through the causes of hallucinations, how to detect them, and most importantly, how to build hallucination-proof AI agents for production environments.

What Causes Hallucinations in AI Agents?

1. Lack of Grounded Data

- LLMs are trained largely on public datasets with a fixed cutoff date. Without real-time or domain-specific grounding, responses may be outdated or fictional.

2. Prompt Ambiguity or Gaps

- Poorly framed prompts or missing context lead to guessing.

3. No Retrieval Layer

- Hallucinations are far more likely when agents rely purely on what they learned during training rather than querying factual sources.

4. No Output Validation

- If there’s no downstream fact-checking, hallucinations slip into production responses.

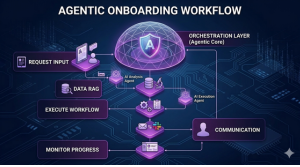

Architectures for Hallucination-Resistant AI

1. RAG (Retrieval-Augmented Generation)

Combine LLM generation with live retrieval from a vector store (a database like Pinecone or Weaviate, or an index library like FAISS).

Benefits:

- Injects domain-specific facts into prompts

- Improves accuracy and reduces reliance on memorized training data

Tooling: LangChain, LlamaIndex, Semantic Kernel
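To make the retrieve-then-generate flow concrete, here is a minimal sketch in plain Python. It is not how LangChain or LlamaIndex implement RAG: the `DOCS` dictionary, the document IDs, and the bag-of-words `cosine` scoring are all assumptions standing in for a real embedding model and vector store.

```python
import math
import re
from collections import Counter

# Hypothetical in-memory knowledge base; a real system would use a vector store.
DOCS = {
    "policy-001": "Refunds are available within 30 days of purchase.",
    "policy-002": "Support is available Monday to Friday, 9am to 5pm.",
    "policy-003": "Enterprise plans include single sign-on and audit logs.",
}

def tokenize(text):
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())

def cosine(a, b):
    """Cosine similarity between two bag-of-words Counters."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

def retrieve(query, docs, k=2):
    """Return up to k document IDs with non-zero similarity, best first."""
    q = Counter(tokenize(query))
    scored = [(cosine(q, Counter(tokenize(text))), doc_id) for doc_id, text in docs.items()]
    scored.sort(reverse=True)
    return [doc_id for score, doc_id in scored[:k] if score > 0]

def build_prompt(query, docs, k=2):
    """Inject the retrieved passages into the prompt sent to the LLM."""
    hits = retrieve(query, docs, k)
    context = "\n".join(f"[{doc_id}] {docs[doc_id]}" for doc_id in hits)
    return (
        "Answer using only the context below. Cite the document ID.\n"
        f"Context:\n{context}\n\nQuestion: {query}"
    )
```

Calling `build_prompt("Are refunds available after purchase?", DOCS)` produces a prompt whose context section contains the refund policy passage, so the model answers from retrieved text rather than from memory.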

2. Tool-Calling Agents

LLMs are paired with tools (e.g. search APIs, calculators, internal databases).

How it works:

- Agent gets a query → delegates part of the task to a tool → returns combined response
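The delegation step can be sketched as a small dispatcher. Everything here is illustrative: the `TOOLS` registry, the `run_agent` function, and the shape of `tool_request` are assumptions. In a real agent the LLM would emit the tool request as structured output, and here it is passed in by hand.

```python
import ast
import operator

# Arithmetic operators the calculator tool is allowed to apply.
_OPS = {
    ast.Add: operator.add,
    ast.Sub: operator.sub,
    ast.Mult: operator.mul,
    ast.Div: operator.truediv,
}

def calculator(expression):
    """Evaluate +, -, *, / arithmetic by walking the AST instead of calling eval()."""
    def _eval(node):
        if (isinstance(node, ast.Constant)
                and isinstance(node.value, (int, float))
                and not isinstance(node.value, bool)):
            return node.value
        if isinstance(node, ast.BinOp) and type(node.op) in _OPS:
            return _OPS[type(node.op)](_eval(node.left), _eval(node.right))
        raise ValueError("unsupported expression")
    return _eval(ast.parse(expression, mode="eval").body)

# Hypothetical tool registry: tool name -> callable.
TOOLS = {"calculator": calculator}

def run_agent(query, tool_request):
    """tool_request stands in for the structured tool call an LLM would emit."""
    name, argument = tool_request["tool"], tool_request["input"]
    if name not in TOOLS:
        return "I don't know."
    result = TOOLS[name](argument)
    return f"{query} -> {result} (computed by {name})"
```

The point of the design is that the number in the final response comes from the tool, not from the model's guess, and an unknown tool name falls back to "I don't know." instead of an invented answer.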

3. Response Ranking & Validation Pipelines

Use a second LLM or logic-based validator to:

- Check if facts are cited

- Flag hallucinated outputs

- Replace/annotate uncertain content
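As a rough illustration of the logic-based option, the sketch below checks each sentence for a citation and annotates anything it cannot tie to a known source. The `[doc-id]` citation format, the `validate_response` name, and the `[unverified]` marker are assumptions made for this example. A second-LLM validator would replace the simple rule inside the loop.

```python
import re

# Assumed citation format: a document ID in square brackets, e.g. [policy-001].
CITATION = re.compile(r"\[([A-Za-z0-9_-]+)\]")

def validate_response(text, known_sources):
    """Return (annotated_text, flagged_sentences) for a drafted answer.

    A sentence passes only if it cites at least one source and every
    cited ID is in known_sources; otherwise it is flagged and annotated.
    """
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]
    annotated, flagged = [], []
    for sentence in sentences:
        cited = CITATION.findall(sentence)
        if cited and all(c in known_sources for c in cited):
            annotated.append(sentence)
        else:
            flagged.append(sentence)
            annotated.append(sentence + " [unverified]")
    return " ".join(annotated), flagged
```

A rule like this only verifies that a citation exists and points at a retrieved document. It does not check that the document actually supports the claim, which is where a second model or a human reviewer comes in.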

Prompt Engineering for Better Grounding

Best Practices:

- Be explicit in what you want: “Answer based only on the attached policy document.”

- Add guardrails: “If unsure, respond with ‘I don’t know.’”

- Use step-by-step prompting to force reasoning

Few-Shot & Chain-of-Thought Examples:

- Add 2–3 reference Q&A examples

- Ask model to cite source doc IDs in response
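The practices above can be combined into a single prompt template. This is one possible layout, not a canonical one: the function name `build_grounded_prompt`, the section labels, and the bracketed doc ID format are assumptions for illustration.

```python
def build_grounded_prompt(question, documents, examples=()):
    """Assemble a prompt with explicit grounding, a guardrail, and few-shot examples.

    documents: mapping of doc ID -> passage text
    examples:  iterable of (question, answer) reference pairs
    """
    lines = [
        "Answer based only on the documents below.",
        "If unsure, respond with \"I don't know.\"",
        "Think step by step, then cite the source doc IDs you used in square brackets.",
        "",
        "Documents:",
    ]
    lines += [f"[{doc_id}] {text}" for doc_id, text in documents.items()]
    if examples:
        lines += ["", "Examples:"]
        for example_question, example_answer in examples:
            lines += [f"Q: {example_question}", f"A: {example_answer}"]
    lines += ["", f"Q: {question}", "A:"]
    return "\n".join(lines)
```

For example, passing `{"hr-12": "Employees accrue 1.5 vacation days per month."}` as `documents` and two or three `(question, answer)` pairs as `examples` yields a prompt that shows the model both the allowed sources and the expected citation style.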

Dataset Curation and Knowledge Injection

Even with RAG, the quality of data fed into the vector store matters.

Guidelines:

- Prefer structured data: FAQs, handbooks, SOPs

- Chunk docs into 200–400 token sections

- Use metadata tags for filters (e.g., department, language, version)

Tip: Embed each chunk’s text together with its key metadata (a hybrid text + metadata embedding) to improve retrieval relevance.
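A minimal sketch of the chunking and tagging guidelines follows. It approximates tokens with whitespace-separated words, which is an assumption: a production pipeline would count tokens with the tokenizer of its embedding model. The optional `overlap` parameter is also an addition for illustration and is off by default.

```python
def chunk_document(text, metadata, max_tokens=300, overlap=0):
    """Split text into chunks of at most max_tokens whitespace tokens.

    Each chunk carries a copy of the document metadata plus its position,
    so retrieval can later filter by department, language, version, etc.
    """
    if not 0 <= overlap < max_tokens:
        raise ValueError("overlap must be >= 0 and smaller than max_tokens")
    tokens = text.split()
    step = max_tokens - overlap
    chunks = []
    for start in range(0, len(tokens), step):
        window = tokens[start:start + max_tokens]
        chunks.append({
            "text": " ".join(window),
            "metadata": {**metadata, "chunk_index": len(chunks)},
        })
        if start + max_tokens >= len(tokens):
            break  # the last window already reached the end of the document
    return chunks

def filter_chunks(chunks, **criteria):
    """Keep only chunks whose metadata matches every given key/value pair."""
    return [
        c for c in chunks
        if all(c["metadata"].get(key) == value for key, value in criteria.items())
    ]
```

With the default of 300 tokens, a 700-word handbook page becomes three chunks, and `filter_chunks(chunks, department="support", version="2.1")` narrows the candidates before any similarity search runs.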

Guardrails, Output Validators & Safety Layers

Guardrail Frameworks:

- Guardrails AI for structured output validation, Rebuff for prompt-injection detection, TruEra for LLM evaluation and monitoring

Safety Techniques:

- Threshold-based output filtering

- Toxicity, bias, or claim detection via auxiliary models

- Human-in-the-loop workflows for sensitive use cases
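These techniques can be wired together as a small routing step in front of the user. The sketch below is an assumption-heavy illustration: `toy_toxicity_score` is a keyword stand-in for a real auxiliary model, and the `route` function, its return values, and the 0.7 threshold are made up for this example.

```python
def toy_toxicity_score(text):
    """Stand-in for an auxiliary classifier: returns a risk score in [0, 1]."""
    blocked = {"idiot", "stupid"}
    words = (w.strip(".,!?") for w in text.lower().split())
    return 1.0 if any(w in blocked for w in words) else 0.0

def route(draft, checks, threshold=0.7, sensitive=False):
    """Decide what happens to a drafted response.

    checks: mapping of check name -> callable(text) returning a risk score.
    Returns (action, failed_checks), where action is one of
    "block", "human_review", or "send".
    """
    scores = {name: check(draft) for name, check in checks.items()}
    failed = [name for name, score in scores.items() if score >= threshold]
    if failed:
        return ("block", failed)
    if sensitive:
        # Sensitive use cases always get a human look, even if all checks pass.
        return ("human_review", [])
    return ("send", [])
```

Keeping the checks in a dictionary means a bias or claim-detection model can be added later as one more entry without touching the routing logic.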

Case Study: Hallucination-Proof AI Helpdesk Agent

A SaaS firm deployed a GenAI agent trained on product documentation. Users received inaccurate troubleshooting steps.

Fixes Implemented by MetaDesign Solutions:

- Switched to RAG + metadata filter by product version

- Added fallback: escalate to human if confidence < 80%

- Added inline citations with source links
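The three fixes can be sketched together as follows. This is not the code used in the engagement: the candidate record fields, the example URLs, and the use of the retrieval score as the confidence value are all assumptions. Only the 80% threshold comes from the case study.

```python
ESCALATION_THRESHOLD = 0.80  # from the case study: escalate below 80% confidence

def answer(product_version, candidates):
    """Pick a reply from retrieved candidates for one product version.

    candidates: list of dicts with keys "text", "product_version",
    "score" (assumed confidence in [0, 1]), and "url" (source link).
    """
    # Fix 1: metadata filter, so steps for other versions are never used.
    matching = [c for c in candidates if c["product_version"] == product_version]
    if not matching:
        return {"action": "escalate_to_human", "reply": None}
    best = max(matching, key=lambda c: c["score"])
    # Fix 2: fallback to a human when confidence is too low.
    if best["score"] < ESCALATION_THRESHOLD:
        return {"action": "escalate_to_human", "reply": None}
    # Fix 3: inline citation with a source link.
    return {"action": "reply", "reply": f'{best["text"]} (Source: {best["url"]})'}
```

Note that a high-scoring passage for the wrong product version is discarded before scoring is even considered, which addresses the inaccurate troubleshooting steps users had reported.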

Result:

- Accuracy increased from 72% to 95%

- User trust improved with verifiable responses

Evaluation & Monitoring of AI Agents

Use tools like:

- WhyLabs or Humanloop for prompt evaluation

- Phoenix (Arize) for tracing hallucinations

- Custom dashboards for:

  - Hallucination rate

  - Response confidence

  - Source citation coverage
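The three dashboard metrics are straightforward to compute once each response is logged with a few fields. The record layout below (`hallucinated`, `confidence`, `citations`) is an assumption for illustration. In practice the `hallucinated` label would come from human review or an automated evaluator.

```python
def summarize(logs):
    """Compute dashboard metrics from a list of logged responses.

    Each record is a dict with:
      "hallucinated": bool, label from review or an evaluator
      "confidence":   float in [0, 1]
      "citations":    list of cited source IDs (may be empty)
    """
    total = len(logs)
    if total == 0:
        return {"hallucination_rate": 0.0, "mean_confidence": 0.0, "citation_coverage": 0.0}
    return {
        # Share of responses labeled as hallucinated.
        "hallucination_rate": sum(1 for r in logs if r["hallucinated"]) / total,
        # Average reported confidence across all responses.
        "mean_confidence": sum(r["confidence"] for r in logs) / total,
        # Share of responses that cite at least one source.
        "citation_coverage": sum(1 for r in logs if r["citations"]) / total,
    }
```

Tracking these per day or per release makes regressions visible, for example a drop in citation coverage after a prompt change.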

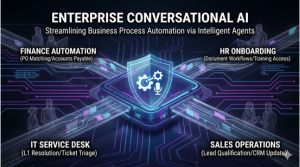

Why MetaDesign Solutions for Safe AI Development?

- Expertise in building enterprise-grade AI agents

- Implementation of RAG, tool-based agents, and validation stacks

- Deployment on AWS, Azure, GCP with compliance focus

- Custom dashboards and human-in-loop workflows

Explore our services:

- AI Development Services

- Software Consultancy

- Custom AI Agent Design

Final Thoughts: Accuracy Is UX in AI

Users won’t forgive incorrect answers, no matter how fluent the delivery is. If you’re deploying AI agents in 2025, you need them to be fact-grounded, validated, and trustworthy.

Want to make your AI agent hallucination-proof?

Book a free consultation with our expert AI systems team today.
